Floating point

In computing, floating point describes a system for representing numbers that would be too large or too small to be represented as integers. Numbers are in general represented approximately to a fixed number of significant digits and scaled using an exponent. The base for the scaling is normally 2, 10 or 16. The typical number that can be represented exactly is of the form:

- significant digits × base^exponent

The term floating point refers to the fact that the radix point (decimal point, or, more commonly in computers, binary point) can "float"; that is, it can be placed anywhere relative to the significant digits of the number. This position is indicated separately in the internal representation, and floating-point representation can thus be thought of as a computer realization of scientific notation. Over the years, several different floating-point representations have been used in computers; however, for the last ten years the most commonly encountered representation is that defined by the IEEE 754 Standard.

The advantage of floating-point representation over fixed-point (and integer) representation is that it can support a much wider range of values. For example, a fixed-point representation that has seven decimal digits with two decimal places can represent the numbers 12345.67, 123.45, 1.23 and so on, whereas a floating-point representation (such as the IEEE 754 decimal32 format) with seven decimal digits could in addition represent 1.234567, 123456.7, 0.00001234567, 1234567000000000, and so on. The floating-point format needs slightly more storage (to encode the position of the radix point), so when stored in the same space, floating-point numbers achieve their greater range at the expense of precision.

The speed of floating-point operations is an important measure of performance for computers in many application domains. It is measured in FLOPS.

Overview

A number representation (called a numeral system in mathematics) specifies some way of storing a number that may be encoded as a string of digits. The arithmetic is defined as a set of actions on the representation that simulate classical arithmetic operations.

There are several mechanisms by which strings of digits can represent numbers. In common mathematical notation, the digit string can be of any length, and the location of the radix point is indicated by placing an explicit "point" character (dot or comma) there. If the radix point is omitted then it is implicitly assumed to lie at the right (least significant) end of the string (that is, the number is an integer). In fixed-point systems, some specific assumption is made about where the radix point is located in the string. For example, the convention could be that the string consists of 8 decimal digits with the decimal point in the middle, so that "00012345" has a value of 1.2345.

In scientific notation, the given number is scaled by a power of 10 so that it lies within a certain range, typically between 1 and 10, with the radix point appearing immediately after the first digit. The scaling factor, as a power of ten, is then indicated separately at the end of the number. For example, the revolution period of Jupiter's moon Io is 152853.5047 seconds. This is represented in standard-form scientific notation as 1.528535047 × 10^5 seconds.

Floating-point representation is similar in concept to scientific notation. Logically, a floating-point number consists of:

- A signed digit string of a given length in a given base (or radix). This is known as the significand, or sometimes the mantissa (see below) or coefficient. The radix point is not explicitly included, but is implicitly assumed to always lie in a certain position within the significand—often just after or just before the most significant digit, or to the right of the rightmost digit. This article will generally follow the convention that the radix point is just after the most significant (leftmost) digit. The length of the significand determines the precision to which numbers can be represented.

- A signed integer exponent, also referred to as the characteristic or scale, which modifies the magnitude of the number.

The significand is multiplied by the base raised to the power of the exponent, equivalent to shifting the radix point from its implied position by a number of places equal to the value of the exponent—to the right if the exponent is positive or to the left if the exponent is negative.

Using base-10 (the familiar decimal notation) as an example, the number 152853.5047, which has ten decimal digits of precision, is represented as the significand 1528535047 together with an exponent of 5 (if the implied position of the radix point is after the first, most significant, digit, here 1). To recover the actual value, a decimal point is placed after the first digit of the significand and the result is multiplied by 10^5 to give 1.528535047 × 10^5, or 152853.5047. In storing such a number, the base (10) need not be stored, since it will be the same for all numbers used, and can thus be inferred. It could as easily be written 1.528535047 E 5 (and sometimes is), where "E" is taken to mean "multiplied by ten to the power of", as long as the convention is known to all parties.

Symbolically, this final value is

- s × b^e

where s is the value of the significand (after taking into account the implied radix point), b is the base, and e is the exponent.

Equivalently, this is:

- (s / b^(p−1)) × b^e

where s here means the integer value of the entire significand, ignoring any implied decimal point, and p is the precision, that is, the number of digits in the significand.

Historically, different bases have been used for representing floating-point numbers, with base 2 (binary) being the most common, followed by base 10 (decimal), and other less common varieties such as base 16 (hexadecimal notation). Floating-point numbers are rational numbers because they can be represented as one integer divided by another. The base, however, determines the fractions that can be represented. For instance, 1/5 cannot be represented exactly as a floating-point number using a binary base but can be represented exactly using a decimal base.
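The point about 1/5 can be checked with a short Python sketch. It assumes that Python's `float` is an IEEE 754 binary double (true on common platforms) and uses the standard `fractions` and `decimal` modules; the variable names are illustrative only.

```python
from decimal import Decimal
from fractions import Fraction

# A binary double is a rational number: an integer over a power of two.
num, den = (0.2).as_integer_ratio()
is_power_of_two = den & (den - 1) == 0

# The stored binary value is close to, but not exactly, 1/5.
binary_is_exact = Fraction(num, den) == Fraction(1, 5)

# A decimal (base-10) representation holds 0.2 exactly.
decimal_is_exact = Fraction(Decimal("0.2")) == Fraction(1, 5)

print(is_power_of_two, binary_is_exact, decimal_is_exact)  # True False True
```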

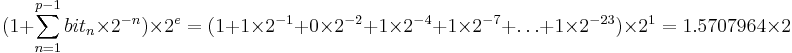

The way in which the significand, exponent and sign bits are internally stored on a computer is implementation-dependent. The common IEEE formats are described in detail later and elsewhere, but as an example, in the binary single-precision (32-bit) floating-point representation p = 24, and so the significand is a string of 24 bits (1s and 0s). For instance, the first 33 bits of π are 11001001 00001111 11011010 10100010 0. Rounding to 24 bits in round-to-nearest mode depends on the 25th bit and what follows it: here the 25th bit is 1 and is followed by further non-zero bits, so the 24th bit is rounded up, which yields 11001001 00001111 11011011. When this is stored using the IEEE 754 encoding, this becomes the significand s with e = 1 (where s is assumed to have a binary point to the right of the first bit) after a left-adjustment (or normalization) during which any leading or padding zeros are discarded; they do not affect the value. Then, since the first bit of a non-zero normalized binary significand is always 1, it need not be stored, giving an extra bit of precision. To calculate π the formula is

- π ≈ (1 + Σ_{n=1}^{p−1} bit_n × 2^−n) × 2^e = (1 + 1×2^−1 + 0×2^−2 + 1×2^−4 + 1×2^−7 + ... + 1×2^−23) × 2^1 ≈ 3.1415927

where bit_n is the nth stored bit of the normalized significand, counted from the left. Normalization, which is reversed when 1 is being added above, can be thought of as a form of compression; it allows a binary significand to be compressed into a field one bit shorter than the maximum precision, at the expense of extra processing.
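As a rough illustration, the reconstruction of π from its stored single-precision bits can be sketched in Python. The 23-bit string below is the rounded significand quoted above with its leading (hidden) 1 removed; the variable names are hypothetical.

```python
# 23 stored bits of the rounded significand (hidden leading 1 removed).
stored_bits = "10010010000111111011011"
e = 1  # exponent for pi, as given in the text

# Add back the hidden 1, then weight the nth stored bit by 2**-n.
fraction = sum(int(bit) * 2.0 ** -n for n, bit in enumerate(stored_bits, start=1))
value = (1 + fraction) * 2 ** e

print(value)  # approximately 3.1415927
```

Every term is a power of two and the partial sums fit in 24 bits, so the double-precision arithmetic used here is exact.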

The word "mantissa" is often used as a synonym for significand. Many people do not consider this usage to be correct, because the mantissa is traditionally defined as the fractional part of a logarithm, while the characteristic is the integer part. This terminology comes from the way logarithm tables were used before computers became commonplace. Log tables were actually tables of mantissas. Therefore, a mantissa is the logarithm of the significand.

Some other computer representations for non-integral numbers

Floating-point representation, in particular the standard IEEE format, is by far the most common way of representing an approximation to real numbers in computers because it is efficiently handled in most large computer processors. However, there are alternatives:

- Fixed-point representation uses integer hardware operations controlled by a software implementation of a specific convention about the location of the binary or decimal point, for example, 6 bits or digits from the right. The hardware to manipulate these representations is less costly than floating-point and is also commonly used to perform integer operations. Binary fixed point is usually used in special-purpose applications on embedded processors that can only do integer arithmetic, but decimal fixed point is common in commercial applications.

- Binary-coded decimal is an encoding for decimal numbers in which each digit is represented by its own binary sequence.

- Where greater precision is desired, floating-point arithmetic can be implemented (typically in software) with variable-length significands (and sometimes exponents) that are sized depending on actual need and depending on how the calculation proceeds. This is called arbitrary-precision arithmetic.

- Some numbers (e.g., 1/3 and 0.1) cannot be represented exactly in binary floating-point no matter what the precision. Software packages that perform rational arithmetic represent numbers as fractions with integral numerator and denominator, and can therefore represent any rational number exactly. Such packages generally need to use "bignum" arithmetic for the individual integers.

- Computer algebra systems such as Mathematica and Maxima can often handle irrational numbers like π or √3 in a completely "formal" way, without dealing with a specific encoding of the significand. Such programs can evaluate expressions like "sin 3π" exactly, because they "know" the underlying mathematics.

- A representation based on natural logarithms is sometimes used in FPGA-based applications where most arithmetic operations are multiplication or division.[1] Like floating-point representation, this solution has precision for smaller numbers, as well as a wide range.

Range of floating-point numbers

By allowing the radix point to be adjustable, floating-point notation allows calculations over a wide range of magnitudes, using a fixed number of digits, while maintaining good precision. For example, in a decimal floating-point system with three digits, the multiplication that humans would write as

- 0.12 × 0.12 = 0.0144

would be expressed as

- (1.2 × 10^−1) × (1.2 × 10^−1) = (1.44 × 10^−2).

In a fixed-point system with the decimal point at the left, it would be

- 0.120 × 0.120 = 0.014.

A digit of the result was lost because of the inability of the digits and decimal point to 'float' relative to each other within the digit string.

The range of floating-point numbers depends on the number of bits or digits used for representation of the significand (the significant digits of the number) and for the exponent. On a typical computer system, a 'double precision' (64-bit) binary floating-point number has a coefficient of 53 bits (one of which is implied), an exponent of 11 bits, and one sign bit. Positive floating-point numbers in this format have an approximate range of 10^−308 to 10^308 (because 308 is approximately 1023 × log10(2), since the range of the exponent is [−1022, 1023]). The complete range of the format is from about −10^308 through +10^308 (see IEEE 754).

The number of normalized floating-point numbers in a system F(B, P, L, U) (where B is the base of the system, P is the precision of the system in digits, L is the smallest exponent representable in the system, and U is the largest exponent used in the system) is: 2 * (B - 1) * B^(P-1) * (U - L + 1).

There is a smallest positive normalized floating-point number, Underflow level = UFL = B^L which has a 1 as the leading digit and 0 for the remaining digits of the mantissa, and the smallest possible value for the exponent.

There is a largest floating point number, Overflow level = OFL = B^(U + 1) * (1 - B^(-P)) which has B - 1 as the value for each digit of the mantissa and the largest possible value for the exponent.

In addition there are representable values strictly between −UFL and UFL. Namely, zero and negative zero, as well as subnormal numbers.
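The three formulas above can be tried out in Python. The sketch below uses exact rational arithmetic and, as an assumption, takes IEEE 754 double precision as the system F(2, 53, −1022, 1023), comparing the results with the limits Python reports in `sys.float_info`. The function names are illustrative, not part of any library.

```python
import sys
from fractions import Fraction

def count_normalized(B, P, L, U):
    """Number of normalized values: 2 * (B - 1) * B^(P-1) * (U - L + 1)."""
    return 2 * (B - 1) * B ** (P - 1) * (U - L + 1)

def underflow_level(B, L):
    """Smallest positive normalized value, UFL = B^L."""
    return Fraction(B) ** L

def overflow_level(B, P, U):
    """Largest finite value, OFL = B^(U+1) * (1 - B^(-P))."""
    return Fraction(B) ** (U + 1) * (1 - Fraction(B) ** (-P))

B, P, L, U = 2, 53, -1022, 1023  # assumed parameters for binary double
ufl = underflow_level(B, L)
ofl = overflow_level(B, P, U)

print(count_normalized(B, P, L, U))
print(ufl == Fraction(sys.float_info.min), ofl == Fraction(sys.float_info.max))
```

A toy system such as F(2, 3, −1, 1) is small enough to enumerate by hand: the formula gives 2 × 1 × 4 × 3 = 24 normalized values.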

History

In 1938, Konrad Zuse of Berlin completed the "Z1", the first mechanical binary programmable computer. It worked with 22-bit floating-point numbers having a 7-bit exponent, 15-bit significand (including one implicit bit), and a sign bit. The memory used sliding metal parts to store 64 such numbers. The Z3, completed in 1941, implemented floating point arithmetic exceptions with representations for plus and minus infinity and undefined.

Once electronic digital computers became a reality, the need to process data in this way was quickly recognized. The first commercial computer to be able to do this in hardware appears to be the Z4, designed in 1942-1945, followed by the IBM 704 in 1954. For some time after that, floating-point hardware was an optional feature, and computers that had it were said to be "scientific computers", or to have "scientific computing" capability. All modern general-purpose computers now have this ability.

The UNIVAC 1100/2200 series, introduced in 1962, supported two floating-point formats. Single precision used 36 bits, organized into a 1-bit sign, 8-bit exponent, and a 27-bit significand. Double precision used 72 bits organized as a 1-bit sign, 11-bit exponent, and a 60-bit significand. The IBM 7094, introduced the same year, also supported single and double precision, with slightly different formats.

Prior to the IEEE-754 standard, computers used many different forms of floating-point. These differed in the word-sizes, the format of the representations, and the rounding behavior of operations. These differing systems implemented different parts of the arithmetic in hardware and software, with varying accuracy.

The IEEE-754 standard was created in the early 1980s, after word sizes of 32 bits (or 16 or 64) had been generally settled upon. Among the innovations are these:

- A precisely specified encoding of the bits, so that all compliant computers would interpret bit patterns the same way. This made it possible to transfer floating-point numbers from one computer to another.

- A precisely specified behavior of the arithmetic operations. This meant that a given program, with given data, would always produce the same result on any compliant computer. This helped reduce the almost mystical reputation that floating-point computation had for seemingly nondeterministic behavior.

- The ability of exceptional conditions (overflow, divide by zero, etc.) to propagate through a computation in a benign manner and be handled by the software in a controlled way.

IEEE 754: floating point in modern computers

The IEEE has standardized the computer representation for binary floating-point numbers in IEEE 754. This standard is followed by almost all modern machines. Notable exceptions include IBM mainframes, which support IBM's own format (in addition to the IEEE 754 binary and decimal formats), and Cray vector machines, where the T90 series had an IEEE version, but the SV1 still uses Cray floating-point format.


The standard provides for many closely-related formats, differing in only a few details. Five of these formats are called basic formats, and two of these are especially widely used in computer hardware and languages:

- Single precision, called "float" in the C language family, and "real" or "real*4" in Fortran. This is a binary format that occupies 32 bits (4 bytes) and its significand has a precision of 24 bits (about 7 decimal digits).

- Double precision, called "double" in the C language family, and "double precision" or "real*8" in Fortran. This is a binary format that occupies 64 bits (8 bytes) and its significand has a precision of 53 bits (about 16 decimal digits).

The other basic formats are quadruple precision (128-bit) binary, as well as decimal floating point (64-bit) and "double" (128-bit) decimal floating point.

Less common formats include:

- Extended precision format, 80-bit floating point value. Sometimes "long double" is used for this in the C language family, though "long double" may be a synonym for "double" or may stand for quadruple precision.

- Half, also called float16, a 16-bit floating point value.

Any integer with absolute value less than or equal to 2^24 can be exactly represented in the single precision format, and any integer with absolute value less than or equal to 2^53 can be exactly represented in the double precision format. Furthermore, a wide range of powers of 2 times such a number can be represented. These properties are sometimes used for purely integer data, to get 53-bit integers on platforms that have double precision floats but only 32-bit integers.
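The 2^53 limit for doubles can be observed directly. This is a minimal sketch, assuming Python's `float` is an IEEE 754 double:

```python
limit = 2 ** 53

# Integers up to 2^53 survive a round trip through a double unchanged.
exact_up_to_limit = all(int(float(n)) == n for n in (limit - 2, limit - 1, limit))

# 2^53 + 1 needs 54 bits, so it rounds to a neighbouring double.
collides = float(limit + 1) == float(limit)

# Powers of two times such integers are also exact.
scaled_is_exact = int(float((limit - 1) * 2 ** 100)) == (limit - 1) * 2 ** 100

print(exact_up_to_limit, collides, scaled_is_exact)  # True True True
```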

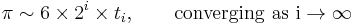

The standard specifies some special values, and their representation: positive infinity (+∞), negative infinity (−∞), a negative zero (−0) distinct from ordinary ("positive") zero, and "not a number" values (NaNs).

Comparison of floating-point numbers, as defined by the IEEE standard, is a bit different from usual integer comparison. Negative and positive zero compare equal, and every NaN compares unequal to every value, including itself. Apart from these special cases, more significant bits are stored before less significant bits. All values except NaN are strictly smaller than +∞ and strictly greater than −∞.
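These comparison rules can be illustrated in Python, again assuming `float` is an IEEE 754 double. The helper `bits` is a hypothetical name; it reinterprets a double as a 64-bit unsigned integer to show that, for positive values, the ordering of the bit patterns matches the numeric ordering.

```python
import struct
import sys

nan = float("nan")
inf = float("inf")

zeros_equal = (0.0 == -0.0)        # the two zeros compare equal
nan_equals_itself = (nan == nan)   # NaN compares unequal even to itself
finite_below_inf = -inf < -sys.float_info.max < sys.float_info.max < inf

def bits(x):
    """Bit pattern of a double as an unsigned 64-bit integer (big-endian)."""
    return struct.unpack(">Q", struct.pack(">d", x))[0]

# For positive values, integer order of the bit patterns follows numeric order.
same_order = (bits(1.5) < bits(2.0)) == (1.5 < 2.0)

print(zeros_equal, nan_equals_itself, finite_below_inf, same_order)
```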

To a rough approximation, the bit representation of an IEEE binary floating-point number is proportional to its base 2 logarithm, with an average error of about 3%. (This is because the exponent field is in the more significant part of the datum.) This can be exploited in some applications, such as volume ramping in digital sound processing.

Although the 32 bit ("single") and 64 bit ("double") formats are by far the most common, the standard actually allows for many different precision levels. Computer hardware (for example, the Intel Pentium series and the Motorola 68000 series) often provides an 80 bit extended precision format, with a 15 bit exponent, a 64 bit significand, and no hidden bit.

There is controversy about the failure of most programming languages to make these extended precision formats available to programmers (although C and related programming languages usually provide these formats via the long double type on such hardware). System vendors may also provide additional extended formats (e.g. 128 bits) emulated in software.

A project for revising the IEEE 754 standard was started in 2000 (see IEEE 754 revision); it was completed and approved in June 2008. It includes decimal floating-point formats and a 16 bit floating point format ("binary16"). binary16 has the same structure and rules as the older formats, with 1 sign bit, 5 exponent bits and 10 trailing significand bits. It is being used in the NVIDIA Cg graphics language, and in the openEXR standard.[2]

Internal representation

Floating-point numbers are typically packed into a computer datum as the sign bit, the exponent field, and the significand (mantissa), from left to right. For the IEEE 754 binary formats they are apportioned as follows:

| Type | Sign | Exponent | Significand | Total bits | Exponent bias | Bits precision |
|---|---|---|---|---|---|---|
| Half (IEEE 754-2008) | 1 | 5 | 10 | 16 | 15 | 11 |
| Single | 1 | 8 | 23 | 32 | 127 | 24 |
| Double | 1 | 11 | 52 | 64 | 1023 | 53 |
| Quad | 1 | 15 | 112 | 128 | 16383 | 113 |

While the exponent can be positive or negative, in binary formats it is stored as an unsigned number that has a fixed "bias" added to it. Values of all 0s in this field are reserved for the zeros and subnormal numbers; values of all 1s are reserved for the infinities and NaNs. The exponent range for normalized numbers is [−126, 127] for single precision, [−1022, 1023] for double, or [−16382, 16383] for quad. Normalized numbers exclude subnormal values, zeros, infinities, and NaNs.

In the IEEE binary interchange formats the leading 1 bit of a normalized significand is not actually stored in the computer datum. It is called the "hidden" or "implicit" bit. Because of this, single precision format actually has a significand with 24 bits of precision, double precision format has 53, and quad has 113.

For example, it was shown above that π, rounded to 24 bits of precision, has:

- sign = 0 ; e = 1 ; s = 110010010000111111011011 (including the hidden bit)

The sum of the exponent bias (127) and the exponent (1) is 128, so this is represented in single precision format as

- 0 10000000 10010010000111111011011 (excluding the hidden bit) = 40490FDB [1] as a hexadecimal number.
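The encoding of π can be reproduced with Python's standard `struct` module, which converts a value to IEEE 754 single precision when packing with the `f` format. The sketch below then splits the 32-bit word into the three fields from the table; the variable names are illustrative.

```python
import math
import struct

# Pack pi as a big-endian IEEE 754 single and read it back as an integer.
word = int.from_bytes(struct.pack(">f", math.pi), "big")

sign = word >> 31                     # 1 bit
exponent_field = (word >> 23) & 0xFF  # 8 bits, biased by 127
fraction = word & 0x7FFFFF            # 23 stored significand bits

exponent = exponent_field - 127       # remove the bias
significand = (1 << 23) | fraction    # restore the hidden bit

print(f"{word:08X}", sign, exponent, f"{significand:024b}")
```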

Special values

Signed zero

In the IEEE 754 standard, zero is signed, meaning that there exist both a "positive zero" (+0) and a "negative zero" (−0). In most run-time environments, positive zero is usually printed as "0", while negative zero may be printed as "-0". The two values behave as equal in numerical comparisons, but some operations return different results for +0 and −0. For instance, 1/(−0) returns negative infinity (exactly), while 1/+0 returns positive infinity (exactly); these two operations are, however, accompanied by a "divide by zero" exception. A sign-symmetric arccot operation will give different results for +0 and −0 without any exception. The difference between +0 and −0 is mostly noticeable for complex operations at so-called branch cuts.
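A small Python sketch shows the two zeros comparing equal while still being distinguishable. Note, as a caveat, that Python raises `ZeroDivisionError` for `1 / 0.0` rather than returning an infinity, so the example uses `math.copysign` and `math.atan2` (whose branch cut lies along the negative real axis) instead.

```python
import math

neg_zero = -0.0

equal = (0.0 == neg_zero)                    # numerically equal
sign_of_neg_zero = math.copysign(1.0, neg_zero)  # recovers the sign bit

# Approaching the negative real axis from above or from below:
angle_above = math.atan2(0.0, -1.0)
angle_below = math.atan2(neg_zero, -1.0)

print(equal, sign_of_neg_zero, angle_above, angle_below)
```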

Subnormal numbers

Subnormal values fill the underflow gap with values whose spacing is the same as that of adjacent values just outside the underflow gap. This is an improvement over the older practice of having only zero in the underflow gap, with underflowing results replaced by zero (flush to zero).

Modern floating point hardware usually handles subnormal values (as well as normal values), and does not require software emulation for subnormals.
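Gradual underflow can be observed in Python on hardware that handles subnormals, assuming `float` is an IEEE 754 double. The sketch is illustrative only:

```python
import sys

smallest_normal = sys.float_info.min   # 2**-1022
smallest_subnormal = 5e-324            # parses to 2**-1074

# Halving the smallest normal number gives a subnormal, not zero.
half = smallest_normal / 2
is_subnormal = 0.0 < half < smallest_normal

# Spacing just above the gap equals the spacing inside it.
spacing_above = smallest_normal * sys.float_info.epsilon
spacing_inside = 2 * smallest_subnormal - smallest_subnormal

# Below the smallest subnormal, results finally round to zero.
underflows_to_zero = (smallest_subnormal / 2 == 0.0)

print(is_subnormal, spacing_above == spacing_inside, underflows_to_zero)
```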

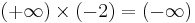

Infinities

The infinities of the extended real number line can be represented in IEEE floating point datatypes, just like ordinary floating point values like 1, 1.5 etc. They are not error values in any way, though they are often (but not always, as it depends on the rounding) used as replacement values when there is an overflow. Upon a divide by zero exception, a positive or negative infinity is returned as an exact result. An infinity can also be introduced as a numeral (like C's "INFINITY" macro, or "∞" if the programming language allows that syntax).

IEEE 754 requires infinities to be handled in a reasonable way, such as

- (+∞) + (+7) = (+∞)

- (+∞) × (−2) = (−∞)

- (+∞) × 0 = NaN: there is no meaningful thing to do
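Arithmetic with infinities can be tried in Python, where `math.inf` is the positive infinity of the `float` type (assumed here to be an IEEE 754 double):

```python
import math
import sys

inf = math.inf

plus_seven = inf + 7       # stays +infinity
times_minus_two = inf * -2  # becomes -infinity
times_zero = inf * 0       # no meaningful value: NaN

# Overflow of a finite product also yields an infinity.
overflowed = sys.float_info.max * 2

print(plus_seven, times_minus_two, times_zero, overflowed)
```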

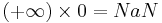

NaNs

IEEE 754 specifies a special value called "Not a Number" (NaN) to be returned as the result of certain "invalid" operations, such as 0/0, ∞×0, or sqrt(-1). There are actually two kinds of NaNs, signalling and quiet. Using a signalling NaN in any arithmetic operation (including numerical comparisons) will cause an "invalid" exception. Using a quiet NaN merely causes the result to be NaN too.

The representation of NaNs specified by the standard has some unspecified bits that could be used to encode the type of error; but there is no standard for that encoding. In theory, signalling NaNs could be used by a runtime system to extend the floating-point numbers with other special values, without slowing down the computations with ordinary values. Such extensions do not seem to be common, though.
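The propagation of a quiet NaN and its bit-level layout can be inspected from Python. This sketch assumes an IEEE 754 double and uses `struct` to view the raw bits; the exact fraction bits of the NaN produced by `float("nan")` are platform-dependent, so only the general pattern (exponent field all 1s, non-zero fraction) is checked.

```python
import math
import struct

nan = float("nan")

# A quiet NaN propagates through ordinary arithmetic.
propagated = nan + 1.0
from_invalid = math.inf - math.inf   # an "invalid" operation

# Look at the raw 64-bit pattern of the NaN.
word = struct.unpack(">Q", struct.pack(">d", nan))[0]
exponent_field = (word >> 52) & 0x7FF
fraction = word & ((1 << 52) - 1)

print(math.isnan(propagated), math.isnan(from_invalid),
      hex(exponent_field), fraction != 0)
```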

Representable numbers, conversion and rounding

By their nature, all numbers expressed in floating-point format are rational numbers with a terminating expansion in the relevant base (for example, a terminating decimal expansion in base-10, or a terminating binary expansion in base-2). Irrational numbers, such as π or √2, or non-terminating rational numbers, must be approximated. The number of digits (or bits) of precision also limits the set of rational numbers that can be represented exactly. For example, the number 123456789 clearly cannot be exactly represented if only eight decimal digits of precision are available.

When a number is represented in some format (such as a character string) which is not a native floating-point representation supported in a computer implementation, then it will require a conversion before it can be used in that implementation. If the number can be represented exactly in the floating-point format then the conversion is exact. If there is not an exact representation then the conversion requires a choice of which floating-point number to use to represent the original value. The representation chosen will have a different value to the original, and the value thus adjusted is called the rounded value.

Whether or not a rational number has a terminating expansion depends on the base. For example, in base-10 the number 1/2 has a terminating expansion (0.5) while the number 1/3 does not (0.333...). In base-2 only rationals with denominators that are powers of 2 (such as 1/2 or 3/16) are terminating. Any rational with a denominator that has a prime factor other than 2 will have an infinite binary expansion. This means that numbers which appear to be short and exact when written in decimal format may need to be approximated when converted to binary floating-point. For example, the decimal number 0.1 is not representable in binary floating-point of any finite precision; the exact binary representation would have a "1100" sequence continuing endlessly:

- e = −4; s = 1100110011001100110011001100110011...,

where, as previously, s is the significand and e is the exponent.

When rounded to 24 bits, and with s now read as a 24-bit integer (so that the exponent is reduced by 23), this becomes

- e = −27; s = 110011001100110011001101,

which is actually 0.100000001490116119384765625 in decimal.

As a further example, the real number π, represented in binary as an infinite series of bits is

- 11.0010010000111111011010101000100010000101101000110000100011010011...

but is

- 11.0010010000111111011011

when approximated by rounding to a precision of 24 bits.

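In binary single-precision floating-point, this is represented as s = 110010010000111111011011 with e = −22 (taking s as a 24-bit integer; with the binary point placed after the first bit of s, the exponent is e = 1). This has a decimal value of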

- 3.1415927410125732421875,

whereas a more accurate approximation of the true value of π is

- 3.1415926535897932384626433832795...

The result of rounding differs from the true value by about 0.03 parts per million, and matches the decimal representation of π in the first 7 digits. The difference is the discretization error and is limited by the machine epsilon.
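The exact decimal values quoted above can be reproduced in Python. The sketch rounds a double to single precision by packing it with `struct` (format `f`), then converts the result to `Decimal`, which shows the stored binary value exactly. The helper name `to_float32` is hypothetical.

```python
import math
import struct
from decimal import Decimal

def to_float32(x):
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack(">f", struct.pack(">f", x))[0]

tenth32 = Decimal(to_float32(0.1))
pi32 = Decimal(to_float32(math.pi))

# Relative error of the single-precision pi, in parts per million.
ppm = abs(float(pi32) - math.pi) / math.pi * 1e6

print(tenth32)  # 0.100000001490116119384765625
print(pi32)     # 3.1415927410125732421875
print(ppm)
```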

The arithmetical difference between two consecutive representable floating-point numbers which have the same exponent is called a Unit in the Last Place (ULP). For example, if there is no representable number lying between the representable numbers 1.45a70c22 and 1.45a70c24 (both hexadecimal), the ULP is 2×16^−8, or 2^−31. For numbers with an exponent of 0, a ULP is exactly 2^−23 or about 10^−7 in single precision, and about 10^−16 in double precision. The mandated behavior of IEEE-compliant hardware is that the result be within one-half of a ULP.
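For double precision, the ULP can be queried with `math.ulp`, which is available from Python 3.9 onward. The following sketch also checks the half-ULP bound for the conversion of the decimal literal 0.1, using exact rational arithmetic:

```python
import math
import sys
from fractions import Fraction

ulp_at_one = math.ulp(1.0)        # exponent 0: 2**-52 for a double
ulp_at_2_52 = math.ulp(2.0 ** 52)  # ULP grows with the exponent

# The double nearest to 1/10 is within half a ULP of the true value.
error = abs(Fraction(0.1) - Fraction(1, 10))
within_half_ulp = error <= Fraction(math.ulp(0.1)) / 2

print(ulp_at_one, ulp_at_2_52, within_half_ulp)
```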

Rounding modes

Rounding is used when the exact result of a floating-point operation (or a conversion to floating-point format) would need more digits than there are digits in the significand. There are several different rounding schemes (or rounding modes). Historically, truncation was the typical approach. Since the introduction of IEEE 754, the default method (round to nearest, ties to even, sometimes called Banker's Rounding) is more commonly used. This method rounds the ideal (infinitely precise) result of an arithmetic operation to the nearest representable value, and gives that representation as the result.[3] In the case of a tie, the value that would make the significand end in an even digit is chosen. The IEEE 754 standard requires the same rounding to be applied to all fundamental algebraic operations, including square root and conversions, when there is a numeric (non-NaN) result. It means that the results of IEEE 754 operations are completely determined in all bits of the result, except for the representation of NaNs. ("Library" functions such as cosine and log are not mandated.)

Alternative rounding options are also available. IEEE 754 specifies the following rounding modes:

- round to nearest, where ties round to the nearest even digit in the required position (the default and by far the most common mode)

- round to nearest, where ties round away from zero (optional for binary floating-point and commonly used in decimal)

- round up (toward +∞; negative results thus round toward zero)

- round down (toward −∞; negative results thus round away from zero)

- round toward zero (sometimes called "chop" mode; it is similar to the common behavior of float-to-integer conversions, which convert −3.9 to −3)

Alternative modes are useful when the amount of error being introduced must be bounded. Applications that require a bounded error are multi-precision floating-point, and interval arithmetic.

A further use of rounding is when a number is explicitly rounded to a certain number of decimal (or binary) places, as when rounding a result to euros and cents (two decimal places).
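The effect of the different modes can be compared using Python's `decimal` module, whose rounding constants correspond closely to the modes listed above (this mapping is an illustration; `decimal` follows the General Decimal Arithmetic specification rather than binary IEEE 754 hardware). The helper `round_to_int` is a hypothetical name.

```python
from decimal import (Decimal, ROUND_CEILING, ROUND_DOWN, ROUND_FLOOR,
                     ROUND_HALF_EVEN, ROUND_HALF_UP)

def round_to_int(text, mode):
    """Round a decimal string to an integer under the given rounding mode."""
    return int(Decimal(text).quantize(Decimal("1"), rounding=mode))

modes = {
    "nearest, ties to even": ROUND_HALF_EVEN,
    "nearest, ties away from zero": ROUND_HALF_UP,
    "toward +infinity": ROUND_CEILING,
    "toward -infinity": ROUND_FLOOR,
    "toward zero": ROUND_DOWN,
}
samples = ("2.5", "3.5", "-2.5", "-3.9")
table = {name: [round_to_int(s, mode) for s in samples]
         for name, mode in modes.items()}

for name, row in table.items():
    print(f"{name:30s} {row}")

# Rounding a result to euros and cents (two decimal places):
price = Decimal("19.995").quantize(Decimal("0.01"), rounding=ROUND_HALF_EVEN)
```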

Floating-point arithmetic operations

For ease of presentation and understanding, decimal radix with 7 digit precision will be used in the examples, as in the IEEE 754 decimal32 format. The fundamental principles are the same in any radix or precision, except that normalization is optional (it does not affect the numerical value of the result). Here, s denotes the significand and e denotes the exponent.

Addition and subtraction

A simple method to add floating-point numbers is to first represent them with the same exponent. In the example below, the second number is shifted right by three digits, and we then proceed with the usual addition method:

123456.7 = 1.234567 * 10^5

101.7654 = 1.017654 * 10^2 = 0.001017654 * 10^5

Hence:

123456.7 + 101.7654 = (1.234567 * 10^5) + (1.017654 * 10^2)

= (1.234567 * 10^5) + (0.001017654 * 10^5)

= (1.234567 + 0.001017654) * 10^5

= 1.235584654 * 10^5

In detail:

      e=5;  s=1.234567     (123456.7)
    + e=2;  s=1.017654     (101.7654)

      e=5;  s=1.234567
    + e=5;  s=0.001017654  (after shifting)
    --------------------
      e=5;  s=1.235584654  (true sum: 123558.4654)

This is the true result, the exact sum of the operands. It will be rounded to seven digits and then normalized if necessary. The final result is

e=5; s=1.235585 (final sum: 123558.5)

Note that the low 3 digits of the second operand (654) are essentially lost. This is round-off error. In extreme cases, the sum of two non-zero numbers may be equal to one of them:

      e=5;  s=1.234567
    + e=-3; s=9.876543

      e=5;  s=1.234567
    + e=5;  s=0.00000009876543 (after shifting)
    ----------------------
      e=5;  s=1.23456709876543 (true sum)
      e=5;  s=1.234567         (after rounding/normalization)
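Both additions can be replayed with Python's `decimal` module by setting the working precision to seven digits. This is a sketch that mimics the seven-digit decimal arithmetic of the examples; it is not an implementation of the decimal32 format itself.

```python
from decimal import Decimal, localcontext

with localcontext() as ctx:
    ctx.prec = 7  # seven significant digits, as in the worked examples

    # 123456.7 + 101.7654: the exact sum 123558.4654 is rounded to 7 digits.
    total = Decimal("123456.7") + Decimal("101.7654")

    # 123456.7 + 0.009876543: the small operand is absorbed completely.
    absorbed = Decimal("123456.7") + Decimal("0.009876543")

print(total)     # 123558.5
print(absorbed)  # 123456.7
```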

Another problem of loss of significance occurs when two close numbers are subtracted. In the following example e = 5; s = 1.234571 and e = 5; s = 1.234567 are representations of the rationals 123457.1467 and 123456.659.

      e=5;  s=1.234571
    - e=5;  s=1.234567
    ----------------
      e=5;  s=0.000004
      e=-1; s=4.000000 (after rounding/normalization)

The best representation of this difference is e = −1; s = 4.877000, which differs more than 20% from e = −1; s = 4.000000. In extreme cases, the final result may be zero even though an exact calculation may be several million. This cancellation illustrates the danger in assuming that all of the digits of a computed result are meaningful. Dealing with the consequences of these errors is a topic in numerical analysis; see also Accuracy problems.
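The cancellation example can be reproduced in the same way with seven-digit decimal arithmetic in Python. In the sketch below the unary plus rounds each operand to the context precision, standing in for the step where the rationals are first stored as seven-digit floating-point numbers.

```python
from decimal import Decimal, localcontext

x = Decimal("123457.1467")
y = Decimal("123456.659")

with localcontext() as ctx:
    ctx.prec = 7
    a = +x            # unary plus rounds to 7 digits: 123457.1
    b = +y            # rounds to 123456.7
    computed = a - b  # only one significant digit survives

exact = x - y  # default precision (28 digits) is enough to be exact here
relative_gap = (exact - computed) / computed

print(computed, exact, relative_gap)
```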

Multiplication

To multiply, the significands are multiplied while the exponents are added, and the result is rounded and normalized.

e=3; s=4.734612

× e=5; s=5.417242

-----------------------

e=8; s=25.648538980104 (true product)

e=8; s=25.64854 (after rounding)

e=9; s=2.564854 (after normalization)
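The multiplication example can be checked with the following sketch, which assumes Python's `decimal` module with a seven-digit context as a model of the format used here:

```python
from decimal import Context, Decimal

ctx = Context(prec=7)  # seven significant decimal digits

a = Decimal("4734.612")   # e=3; s=4.734612
b = Decimal("541724.2")   # e=5; s=5.417242

true_product = a * b               # exact in the default 28-digit context
rounded_product = ctx.multiply(a, b)

print(true_product, rounded_product)
```

The module multiplies the significands, adds the exponents, and then rounds and normalizes, which corresponds to the three steps shown above.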

Division is done similarly, but is more complicated.

There are no cancellation or absorption problems with multiplication or division, though small errors may accumulate as operations are performed repeatedly.[4] In practice, the way these operations are carried out in digital logic can be quite complex (see Booth's multiplication algorithm and digital division).[5] For a fast, simple method, see the Horner method.

Dealing with exceptional cases

Floating-point computation in a computer can run into three kinds of problems:

- An operation can be mathematically illegal, such as division by zero.

- An operation can be legal in principle, but not supported by the specific format, for example, calculating the square root of −1 or the inverse sine of 2 (both of which result in complex numbers).

- An operation can be legal in principle, but the result can be impossible to represent in the specified format, because the exponent is too large or too small to encode in the exponent field. Such an event is called an overflow (exponent too large), underflow (exponent too small) or denormalization (precision loss).

Prior to the IEEE standard, such conditions usually caused the program to terminate, or triggered some kind of trap that the programmer might be able to catch. How this worked was system-dependent, meaning that floating-point programs were not portable.

The original IEEE 754 standard (from 1985) took a first step towards a standard way for IEEE 754 based operations to record that an error occurred. Here we ignore trapping (optional in the 1985 version) and "alternate exception handling modes" (which replaced trapping in the 2008 version, but are still optional), and look only at the required default method of handling exceptions according to IEEE 754. Arithmetic exceptions are (by default) required to be recorded in "sticky" error indicator bits. "Sticky" means that they are not reset by the next (arithmetic) operation, but stay set until explicitly reset. By default, an operation always returns a result according to specification without interrupting computation. For instance, 1/0 returns +∞, while also setting the divide-by-zero error bit.

The original IEEE 754 standard, however, failed to recommend operations to handle such sets of arithmetic error bits. So while these were implemented in hardware, initially programming language implementations did not automatically provide a means to access them (apart from assembler). Over time some programming language standards (e.g., C and Fortran) have been updated to specify methods to access and change status and error bits. The 2008 version of the IEEE 754 standard now specifies a few operations for accessing and handling the arithmetic error bits. The programming model is based on a single thread of execution and use of them by multiple threads has to be handled by a means outside of the standard.

IEEE 754 specifies five arithmetic errors that are to be recorded in "sticky bits" (by default; note that trapping and other alternatives are optional and, if provided, non-default).

- inexact, set if the rounded (and returned) value is different from the mathematically exact result of the operation.

- underflow, set if the rounded value is tiny (as specified in IEEE 754) and inexact (or, as the 1985 version of IEEE 754 allowed, only if it suffers denormalization loss), returning a subnormal value (including the zeros).

- overflow, set if the absolute value of the rounded value is too large to be represented (an infinity or maximal finite value is returned, depending on which rounding is used).

- divide-by-zero, set if the result is infinite given finite operands (returning an infinity, either +∞ or −∞).

- invalid, set if a real-valued result cannot be returned (like for sqrt(−1), or 0/0), returning a quiet NaN.
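The default, non-trapping behaviour can be sketched with Python's `decimal` module, whose contexts keep sticky flags of the same five kinds. This is an analogy in decimal arithmetic, not a view of the binary hardware flags; the small exponent range (`Emin=-99`, `Emax=99`) is an assumption chosen so that overflow and underflow are easy to provoke.

```python
from decimal import (Context, Decimal, DivisionByZero, Inexact,
                     InvalidOperation, Overflow, Underflow)

# traps=[] disables trapping: every operation returns a default result
# and records the condition in a sticky flag instead of raising.
ctx = Context(prec=7, Emin=-99, Emax=99, traps=[])

inf = ctx.divide(Decimal(1), Decimal(0))              # divide-by-zero
nan = ctx.sqrt(Decimal(-1))                           # invalid
third = ctx.divide(Decimal(1), Decimal(3))            # inexact
huge = ctx.multiply(Decimal("1E60"), Decimal("1E60"))             # overflow
tiny = ctx.multiply(Decimal("1E-60"), Decimal("1.234567E-60"))    # underflow

# The flags are read only now, after all five operations: each one
# stayed set while the later operations ran.
signals = [Inexact, Underflow, Overflow, DivisionByZero, InvalidOperation]
seen = {sig.__name__: bool(ctx.flags[sig]) for sig in signals}

# Flags remain set until they are reset explicitly.
ctx.clear_flags()
cleared = not any(ctx.flags[sig] for sig in signals)

print(inf, nan, third, huge, tiny)
print(seen, cleared)
```

None of the operations interrupts the computation; the program inspects the flags afterwards and decides what to do.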

Accuracy problems

The fact that floating-point numbers cannot precisely represent all real numbers, and that floating-point operations cannot precisely represent true arithmetic operations, leads to many surprising situations. This is related to the finite precision with which computers generally represent numbers.

For example, the non-representability of 0.1 and 0.01 (in binary) means that the result of attempting to square 0.1 is neither 0.01 nor the representable number closest to it. In 24-bit (single precision) representation, 0.1 (decimal) was given previously as e = −4; s = 110011001100110011001101, which is

- 0.100000001490116119384765625 exactly.

Squaring this number gives

- 0.010000000298023226097399174250313080847263336181640625 exactly.

Squaring it with single-precision floating-point hardware (with rounding) gives

- 0.010000000707805156707763671875 exactly.

But the representable number closest to 0.01 is

- 0.009999999776482582092285156250 exactly.
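These four values can be reproduced without single-precision hardware. The sketch below assumes that rounding a double through `struct` format `"f"` is an acceptable model of single-precision rounding; `to_float32` is a hypothetical helper name.

```python
import struct
from decimal import Decimal
from fractions import Fraction

def to_float32(x):
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]

tenth = to_float32(0.1)

# The product of two 24-bit significands needs at most 48 bits,
# so it is held exactly in a 53-bit double.
exact_square = tenth * tenth
product_is_exact = Fraction(exact_square) == Fraction(tenth) ** 2

rounded_square = to_float32(exact_square)   # what binary32 hardware returns
nearest_hundredth = to_float32(0.01)        # binary32 value closest to 0.01

# Decimal(float) prints the exact binary value in decimal.
print(Decimal(tenth))
print(Decimal(exact_square))
print(Decimal(rounded_square))
print(Decimal(nearest_hundredth))
```

The last two printed values differ by one unit in the last place, which is the discrepancy described above.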

Also, the non-representability of π (and π/2) means that an attempted computation of tan(π/2) will not yield a result of infinity, nor will it even overflow. It is simply not possible for standard floating-point hardware to attempt to compute tan(π/2), because π/2 cannot be represented exactly. This computation in C:

    // Enough digits to be sure we get the correct approximation.
    double pi = 3.1415926535897932384626433832795;
    double z = tan(pi/2.0);

will give a result of 16331239353195370.0. In single precision (using the tanf function), the result will be −22877332.0.

By the same token, an attempted computation of sin(π) will not yield zero. The result will be (approximately) 0.1225 × 10^−15 in double precision, or −0.8742 × 10^−7 in single precision.[6]
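The double-precision behaviour can be observed in any language that exposes the platform's math library. A minimal sketch in Python, whose `float` is an IEEE double on common platforms (the exact digits printed depend on that library):

```python
import math

# math.pi is the double closest to π, not π itself.
tan_half_pi = math.tan(math.pi / 2.0)   # very large, but finite
sin_pi = math.sin(math.pi)              # tiny, but not zero
cos_pi = math.cos(math.pi)              # exactly -1.0 (see note 6)

print(tan_half_pi, sin_pi, cos_pi)
```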

While floating-point addition and multiplication are both commutative (a + b = b + a and a×b = b×a), they are not necessarily associative. That is, (a + b) + c is not necessarily equal to a + (b + c). Using 7-digit decimal arithmetic:

a = 1234.567, b = 45.67834, c = 0.0004

(a + b) + c:

1234.567 (a)

+ 45.67834 (b)

____________

1280.24534 rounds to 1280.245

1280.245 (a + b)

+ 0.0004 (c)

____________

1280.2454 rounds to 1280.245 <--- (a + b) + c

a + (b + c):

45.67834 (b)

+ 0.0004 (c)

____________

45.67874

45.67874 (b + c)

+ 1234.567 (a)

____________

1280.24574 rounds to 1280.246 <--- a + (b + c)
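The associativity example can be replayed in a short sketch, assuming Python's `decimal` module with a seven-digit context as the model of 7-digit decimal arithmetic:

```python
from decimal import Context, Decimal

ctx = Context(prec=7)  # seven significant digits, round-half-even

a = Decimal("1234.567")
b = Decimal("45.67834")
c = Decimal("0.0004")

left = ctx.add(ctx.add(a, b), c)    # (a + b) + c
right = ctx.add(a, ctx.add(b, c))   # a + (b + c)

print(left, right, left == right)   # 1280.245 1280.246 False
```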

They are also not necessarily distributive. That is, (a + b) × c may not be the same as a × c + b × c:

1234.567 × 3.333333 = 4115.223

1.234567 × 3.333333 = 4.115223

4115.223 + 4.115223 = 4119.338

but

1234.567 + 1.234567 = 1235.802

1235.802 × 3.333333 = 4119.340
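The same seven-digit model (Python's `decimal` module, used here as an assumption) shows the distributivity failure:

```python
from decimal import Context, Decimal

ctx = Context(prec=7)  # seven significant decimal digits

a = Decimal("1234.567")
b = Decimal("1.234567")
c = Decimal("3.333333")

# a*c + b*c: each product is rounded, then the sum is rounded.
expanded = ctx.add(ctx.multiply(a, c), ctx.multiply(b, c))
# (a + b)*c: the sum is rounded, then the product is rounded.
factored = ctx.multiply(ctx.add(a, b), c)

print(expanded, factored)
```

The two results differ in the last retained digit because rounding happens at different points in the two evaluation orders.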

In addition to loss of significance, inability to represent numbers such as π and 0.1 exactly, and other slight inaccuracies, the following phenomena may occur:

- Cancellation: subtraction of nearly equal operands may cause extreme loss of accuracy. This is perhaps the most common and serious accuracy problem.

- Conversions to integer are not intuitive: converting (63.0/9.0) to integer yields 7, but converting (0.63/0.09) may yield 6. This is because conversions generally truncate rather than round. Floor and ceiling functions may produce answers which are off by one from the intuitively expected value.

- Limited exponent range: results might overflow yielding infinity, or underflow yielding a subnormal number or zero. In these cases precision will be lost.

- Testing for safe division is problematic: Checking that the divisor is not zero does not guarantee that a division will not overflow.

- Testing for equality is problematic. Two computational sequences that are mathematically equal may well produce different floating-point values. Programmers often perform comparisons within some tolerance (often a decimal constant, itself not accurately represented), but that doesn't necessarily make the problem go away.
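The points about limited exponent range and safe division can be illustrated with IEEE doubles. This sketch assumes Python's `float` is an IEEE double, as it is on common platforms:

```python
import math
import sys

largest = sys.float_info.max
overflowed = largest * 10.0              # exceeds the exponent range

smallest_subnormal = 5e-324              # smallest positive double
underflowed = smallest_subnormal / 2.0   # too small even for a subnormal

# The divisor is not zero, yet the quotient still overflows.
divisor = smallest_subnormal
quotient = 1.0 / divisor

print(overflowed, underflowed, divisor != 0.0, quotient)
```

A test of the form "divisor is not zero" passes here, but the division still produces an infinity.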

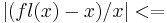

Machine precision

"Machine precision" is a quantity that characterizes the accuracy of a floating point system. It is also known as unit roundoff or machine epsilon. Usually denoted Emach, its value depends on the particular rounding being used.

With rounding to zero,

Emach = B^(1-P)

whereas with rounding to nearest,

Emach = (1/2)*B^(1-P)

This is important since it bounds the relative error in representing any non-zero real number x within the normalized range of a floating point system:

|fl(x) − x| / |x| ≤ Emach

where fl(x) denotes the floating-point number that represents x.
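For IEEE double precision, B = 2 and P = 53. The sketch below evaluates both formulas with exact rational arithmetic and checks the relative-error bound on a few sample values; the samples are arbitrary choices for illustration, and Python's `float` is assumed to be an IEEE double with round-to-nearest.

```python
import sys
from fractions import Fraction

B = sys.float_info.radix       # base of the format
P = sys.float_info.mant_dig    # digits in the significand

eps_toward_zero = Fraction(B) ** (1 - P)   # B^(1-P)
eps_nearest = eps_toward_zero / 2          # (1/2)*B^(1-P)

# Relative representation error of a few rationals, computed exactly.
samples = [Fraction(1, 10), Fraction(1, 3), Fraction(22, 7)]
relative_errors = [abs(Fraction(float(x)) - x) / x for x in samples]
bound_holds = all(err <= eps_nearest for err in relative_errors)

# Adding the round-to-nearest value to 1.0 changes nothing (the tie
# rounds back to 1.0); adding B^(1-P) does.
unchanged = 1.0 + float(eps_nearest) == 1.0
changed = 1.0 + float(eps_toward_zero) > 1.0

print(float(eps_toward_zero), float(eps_nearest), bound_holds)
```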

Minimizing the effect of accuracy problems

Because of the issues noted above, naive use of floating-point arithmetic can lead to many problems. The creation of thoroughly robust floating-point software is a complicated undertaking, and a good understanding of numerical analysis is essential.

In addition to careful design of programs, careful handling by the compiler is required. Certain "optimizations" that compilers might make (for example, reordering operations) can work against the goals of well-behaved software. There is some controversy about the failings of compilers and language designs in this area. See the external references at the bottom of this article.

Binary floating-point arithmetic is at its best when it is simply being used to measure real-world quantities over a wide range of scales (such as the orbital period of Io or the mass of the proton), and at its worst when it is expected to model the interactions of quantities expressed as decimal strings that are expected to be exact. An example of the latter case is financial calculations. For this reason, financial software tends not to use a binary floating-point number representation.[7] The "decimal" data type of the C# programming language, and the IEEE 854 decimal floating-point standard, are designed to avoid the problems of binary floating-point representations when applied to human-entered exact decimal values, and make the arithmetic always behave as expected when numbers are printed in decimal.
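The difference matters even for very small sums. A minimal sketch, using Python's `decimal` module as one example of a decimal floating-point type (the amounts are made up for illustration):

```python
from decimal import Decimal

binary_total = 0.0
decimal_total = Decimal("0.00")

# Add ten payments of 0.10 in each representation.
for _ in range(10):
    binary_total += 0.10
    decimal_total += Decimal("0.10")

print(binary_total == 1.0, decimal_total == Decimal("1.00"))  # False True
```

The binary total misses 1.0 because 0.10 has no exact binary representation, while the decimal total is exact.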

Small errors in floating-point arithmetic can grow when mathematical algorithms perform operations an enormous number of times. A few examples are matrix inversion, eigenvector computation, and differential equation solving. These algorithms must be very carefully designed if they are to work well.

Expectations from mathematics may not be realised in the field of floating-point computation. For example, identities that are known to hold exactly in real arithmetic cannot be counted on when the quantities involved are the result of floating-point computation.

A detailed treatment of the techniques for writing high-quality floating-point software is beyond the scope of this article, and the reader is referred to the references at the bottom of this article. Descriptions of a few simple techniques follow.

The use of the equality test (if (x==y) ...) is usually not recommended when expectations are based on results from pure mathematics. Such tests are sometimes replaced with "fuzzy" comparisons (if (abs(x-y) < epsilon) ...), where epsilon is sufficiently small and tailored to the application, such as 1.0E-13 (see machine epsilon). The wisdom of doing this varies greatly. It is often better to organize the code in such a way that such tests are unnecessary.
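The following sketch contrasts the exact test with a fixed absolute tolerance and with a relative tolerance. The tolerance values are assumptions for illustration, not recommendations; `math.isclose` is Python's standard relative comparison.

```python
import math

x = 0.1 + 0.2
y = 0.3

exact_equal = (x == y)                        # False
abs_close = abs(x - y) < 1.0e-13              # True
rel_close = math.isclose(x, y, rel_tol=1e-9)  # True

# A fixed absolute tolerance depends on the magnitude of the operands.
# Two adjacent doubles near 1e16 are 2.0 apart, so the test rejects them:
big_abs = abs(1e16 - (1e16 + 2.0)) < 1.0e-13            # False
big_rel = math.isclose(1e16, 1e16 + 2.0, rel_tol=1e-9)  # True

# Two tiny values that differ by a factor of two pass the absolute test:
small_abs = abs(1e-20 - 2e-20) < 1.0e-13                # True
small_rel = math.isclose(1e-20, 2e-20, rel_tol=1e-9)    # False

print(exact_equal, abs_close, rel_close)
print(big_abs, big_rel, small_abs, small_rel)
```

Neither kind of tolerance is right for every application, which is one reason the wisdom of fuzzy comparison varies.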

An awareness of when loss of significance can occur is useful. For example, if one is adding a very large number of numbers, the individual addends are very small compared with the sum. This can lead to loss of significance. Suppose, for example, that one needs to add many numbers, all approximately equal to 3. After 1000 of them have been added, the running sum is about 3000. A typical addition would then be something like

3253.671

+ 3.141276

--------

3256.812

The low 3 digits of the addends are effectively lost. The Kahan summation algorithm may be used to reduce the errors.
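A minimal sketch of Kahan (compensated) summation follows. The input of one million copies of 0.1 is an assumption chosen for illustration, and `math.fsum` is used only as an accurately rounded reference. The naive sum is written as an explicit loop because a language's built-in summation may already compensate internally.

```python
import math

def naive_sum(values):
    """Plain left-to-right accumulation."""
    total = 0.0
    for v in values:
        total += v
    return total

def kahan_sum(values):
    """Accumulate while carrying the rounding error of each addition."""
    total = 0.0
    compensation = 0.0              # running estimate of the lost low part
    for v in values:
        y = v - compensation        # re-inject what was lost last time
        t = total + y               # low digits of y may be lost here
        compensation = (t - total) - y   # recover what was just lost
        total = t
    return total

values = [0.1] * 1_000_000
reference = math.fsum(values)

naive_error = abs(naive_sum(values) - reference)
kahan_error = abs(kahan_sum(values) - reference)
print(naive_error, kahan_error)
```

The compensation variable recovers, at each step, the low-order digits that the running total could not hold.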

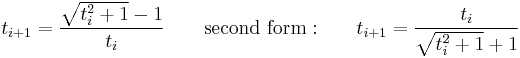

Computations may be rearranged in a way that is mathematically equivalent but less prone to error. As an example, Archimedes approximated π by calculating the perimeters of polygons inscribing and circumscribing a circle, starting with hexagons, and successively doubling the number of sides. The recurrence formula for the circumscribed polygon, starting from t_0 = 1/√3, can be written in two equivalent forms:

- first form: t_(i+1) = (√(t_i^2 + 1) − 1) / t_i

- second form: t_(i+1) = t_i / (√(t_i^2 + 1) + 1)

The approximation to π after i doublings is 6 × 2^i × t_i.

Here is a computation using IEEE "double" (a significand with 53 bits of precision) arithmetic:

| i | 6 × 2^i × t_i, first form | 6 × 2^i × t_i, second form |
|---|---|---|
| 0 | 3.4641016151377543863 | 3.4641016151377543863 |
| 1 | 3.2153903091734710173 | 3.2153903091734723496 |
| 2 | 3.1596599420974940120 | 3.1596599420975006733 |
| 3 | 3.1460862151314012979 | 3.1460862151314352708 |
| 4 | 3.1427145996453136334 | 3.1427145996453689225 |
| 5 | 3.1418730499801259536 | 3.1418730499798241950 |
| 6 | 3.1416627470548084133 | 3.1416627470568494473 |
| 7 | 3.1416101765997805905 | 3.1416101766046906629 |
| 8 | 3.1415970343230776862 | 3.1415970343215275928 |
| 9 | 3.1415937488171150615 | 3.1415937487713536668 |
| 10 | 3.1415929278733740748 | 3.1415929273850979885 |
| 11 | 3.1415927256228504127 | 3.1415927220386148377 |
| 12 | 3.1415926717412858693 | 3.1415926707019992125 |
| 13 | 3.1415926189011456060 | 3.1415926578678454728 |
| 14 | 3.1415926717412858693 | 3.1415926546593073709 |
| 15 | 3.1415919358822321783 | 3.1415926538571730119 |
| 16 | 3.1415926717412858693 | 3.1415926536566394222 |
| 17 | 3.1415810075796233302 | 3.1415926536065061913 |
| 18 | 3.1415926717412858693 | 3.1415926535939728836 |
| 19 | 3.1414061547378810956 | 3.1415926535908393901 |
| 20 | 3.1405434924008406305 | 3.1415926535900560168 |
| 21 | 3.1400068646912273617 | 3.1415926535898608396 |
| 22 | 3.1349453756585929919 | 3.1415926535898122118 |
| 23 | 3.1400068646912273617 | 3.1415926535897995552 |
| 24 | 3.2245152435345525443 | 3.1415926535897968907 |
| 25 | | 3.1415926535897962246 |
| 26 | | 3.1415926535897962246 |
| 27 | | 3.1415926535897962246 |
| 28 | | 3.1415926535897962246 |

The true value is 3.141592653589793238462643383...

While the two forms of the recurrence formula are clearly equivalent, the first subtracts 1 from a number extremely close to 1, leading to huge cancellation errors. Note that, as the recurrence is applied repeatedly, the accuracy improves at first, but then it deteriorates. It never gets better than about 8 digits, even though 53-bit arithmetic should be capable of about 16 digits of precision. When the second form of the recurrence is used, the value converges to 15 digits of precision.
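The comparison can be reproduced with the sketch below. It assumes the tangent half-angle recurrence t ← (√(t² + 1) − 1)/t for the first form and the algebraically equivalent t ← t/(√(t² + 1) + 1) for the second, both starting from t = 1/√3; these formulas are reconstructed from the table and the remark about subtracting 1, and the function name is illustrative. Individual digits may differ slightly from the table depending on how the expression is evaluated.

```python
import math

def archimedes(steps, stable):
    """Return the estimates 6 * 2**i * t_i for i = 0..steps."""
    t = 1.0 / math.sqrt(3.0)
    estimates = [6.0 * t]
    for i in range(1, steps + 1):
        root = math.sqrt(t * t + 1.0)
        if stable:
            t = t / (root + 1.0)      # second form: no subtraction
        else:
            t = (root - 1.0) / t      # first form: cancellation in root - 1
        estimates.append(6.0 * 2.0 ** i * t)
        if t == 0.0:                  # guard: total cancellation ends the run
            break
    return estimates

first = archimedes(24, stable=False)
second = archimedes(28, stable=True)

first_error = abs(first[-1] - math.pi)
second_error = abs(second[-1] - math.pi)
print(first[-1], second[-1])
print(first_error, second_error)
```

The only difference between the two branches is whether the numerator subtracts two nearly equal quantities.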

See also

- Computable number

- Decimal floating point

- double precision

- Fixed-point arithmetic

- FLOPS

- half precision

- IEEE 754 — Standard for Binary Floating-Point Arithmetic

- IBM Floating Point Architecture

- Microsoft Binary Format

- minifloat

- Q (number format) for constant resolution

- quad precision

- Significant digits

- single precision

- Gal's accurate tables

Notes and references

- [1] Haohuan Fu, Oskar Mencer, Wayne Luk (June 2010). "Comparing Floating-point and Logarithmic Number Representations for Reconfigurable Acceleration". IEEE Conference on Field Programmable Technology. http://ieeexplore.ieee.org/xpl/freeabs_all.jsp?arnumber=4042464.

- [2] openEXR

- [3] Computer hardware doesn't necessarily compute the exact value; it simply has to produce the equivalent rounded result as though it had computed the infinitely precise result.

- [4] Goldberg, David (1991). "What Every Computer Scientist Should Know About Floating-Point Arithmetic". ACM Computing Surveys 23: 5-48. doi:10.1145/103162.103163. http://docs.sun.com/source/806-3568/ncg_goldberg.html. Retrieved 2010-09-02.

- [5] The enormous complexity of modern division algorithms once led to a famous error. An early version of the Intel Pentium chip was shipped with a division instruction that, on rare occasions, gave slightly incorrect results. Many computers had been shipped before the error was discovered. Until the defective computers were replaced, patched versions of compilers were developed that could avoid the failing cases. See Pentium FDIV bug.

- [6] But an attempted computation of cos(π) yields −1 exactly. Since the derivative is nearly zero near π, the effect of the inaccuracy in the argument is far smaller than the spacing of the floating-point numbers around −1, and the rounded result is exact.

- [7] General Decimal Arithmetic

Further reading

- What Every Computer Scientist Should Know About Floating-Point Arithmetic, by David Goldberg, published in the March, 1991 issue of Computing Surveys.

- Donald Knuth. The Art of Computer Programming, Volume 2: Seminumerical Algorithms, Third Edition. Addison-Wesley, 1997. ISBN 0-201-89684-2. Section 4.2: Floating Point Arithmetic, pp. 214–264.

- Press et al. Numerical Recipes in C++. The Art of Scientific Computing, ISBN 0-521-75033-4.

External links

- Kahan, William and Darcy, Joseph (2001). How Java’s floating-point hurts everyone everywhere. Retrieved September 5, 2003 from http://www.cs.berkeley.edu/~wkahan/JAVAhurt.pdf.

- Survey of Floating-Point Formats: a very brief summary of floating-point formats that have been used over the years.

- The pitfalls of verifying floating-point computations, by David Monniaux, also printed in ACM Transactions on programming languages and systems (TOPLAS), May 2008: a compendium of non-intuitive behaviours of floating-point on popular architectures, with implications for program verification and testing

- The www.opencores.org website contains open source floating point IP cores for the implementation of floating point operators in FPGA or ASIC devices. The project double_fpu contains Verilog source code of a double precision floating point unit. The project fpuvhdl contains VHDL source code of a single precision floating point unit.
